Sora vs. Veo vs. PixVerse: A 2026 Pro Guide to AI Video Stacks

Sora 2 shut down in March 2026. Compare it with Veo 3.1 and PixVerse on video generation, real-time interactive worlds, and automated ad creation.

Sora 2 went offline on March 24, 2026. OpenAI cited compute costs and regulatory pressure. The same scale and realism that made Sora a category benchmark also drove those costs and that scrutiny, and eventually made it unsustainable. Six days later, PixVerse V6 launched. Google had already shipped Veo 3.1 in October 2025.

In six months, the AI video stack reshuffled. One tool fell off. Two others moved into production. This article covers all three: what Sora was capable of before it shut down, what Veo 3.1 does now, and what PixVerse actually delivers across its V6, R1, and Mini Apps product lines.

Quick answer: If you need a working AI video generator today, the practical choice is Veo 3.1 or PixVerse V6. Veo 3.1 fits teams already operating inside Google Cloud and Gemini workflows. PixVerse V6 fits teams that need longer single-pass clips, built-in multi-shot generation, and more control over cinematic output. If your evaluation goes beyond standard generation, PixVerse also extends into R1 for real-time worlds and Mini Apps for automated ad production.

Sora 2, Veo 3.1, and PixVerse V6 Comparison Table

All three models target the same job: turn a text prompt into a finished video with synchronized audio. The table below compares them on the specs that matter most when choosing a generation tool for creative or production work. Enterprise integration, API access, and deployment cases are covered in each model’s dedicated section further down.

| | Sora 2 | Veo 3.1 | PixVerse V6 |
|---|---|---|---|
| Developer | OpenAI | Google | PixVerse |
| Status | ⛔ Offline since March 24, 2026 | ✅ Active | ✅ Active (launched March 30, 2026) |
| Max resolution | 1080p (Pro tier) | 720p / 1080p / 4K | 1080p |
| Single-pass duration | Up to 12s | 8s | Up to 15s |
| Multi-shot engine | Manual prompting | Sequential extension | Built-in |
| Native audio | Synchronized speech, sfx | Dialogue, sfx, ambience | Simultaneous with motion |
| Text-in-video | Limited | Limited | Multilingual, motion-stable |
| Cinematic controls | Basic | Basic | 20+ lens parameters |
| Free daily credits | None (Pro $200/mo) | Paid API | Yes |
| Developer / API access | API roadmap (now offline) | Gemini API, Vertex AI | CLI + API, agent-compatible |

Sora 2 set the physics benchmark but is no longer available. Veo 3.1 leads on resolution options and ecosystem reach within Google Cloud. PixVerse V6 holds the longest single-pass duration, the most granular cinematic controls, and the only built-in multi-shot engine of the three. With Sora offline, the practical choice narrows to Veo 3.1 and PixVerse V6. PixVerse also extends into R1 and Mini Apps, which we cover in detail below.

Which AI Video Tool Should You Choose in 2026?

If your goal is a standard text-to-video workflow, the real comparison is no longer Sora versus Veo versus PixVerse in equal terms. Sora 2 is part of the historical benchmark, but the active buying decision is between Veo 3.1 and PixVerse V6.

Choose Veo 3.1 if your team already runs inside Google Cloud, needs Gemini or Vertex AI integration, and values 4K options plus a familiar enterprise stack more than longer single-pass output.

Choose PixVerse V6 if you need up to 15 seconds in one pass, built-in multi-shot generation, stronger cinematic controls, and a workflow that can move from testing to production without stitching together multiple scene extensions.

Choose PixVerse R1 if your use case is not a finished video file but a live, interactive world that responds to users in real time. That is a different product category from both Sora 2 and Veo 3.1.

Choose PixVerse Mini Apps if your real job is automated ad creation from product assets rather than prompt-based filmmaking. In that case, the relevant comparison is against traditional ad production workflows, not only against general video generators.

Side-by-Side Output Test: 3 AI Video Generators Compared

Specs describe potential. The same prompt run across all three tools shows how each model actually behaves under pressure.

Test prompt:

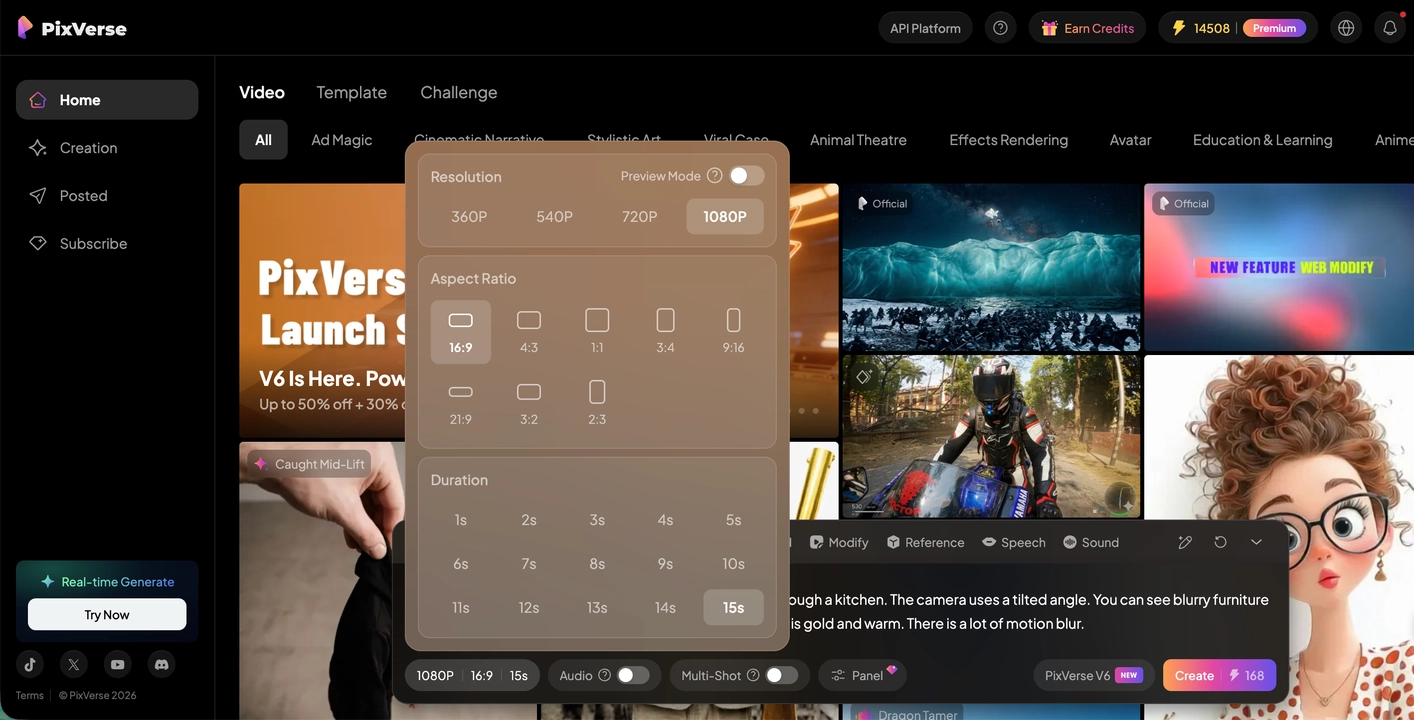

A realistic close up of a bee flying very fast through a kitchen. The camera uses a tilted angle. You can see blurry furniture and a broken honey jar on a table. The lighting is gold and warm. There is a lot of motion blur.

The prompt was chosen to stress three things at once: fast subject motion, fine material detail (glass, honey, metal surfaces), and fisheye spatial geometry. We scored each output on spatial consistency, temporal stability, and native audio accuracy.

Sora 2

The kitchen read beautifully. Warm grade, cinematic depth, strong ambient light that felt considered rather than procedural. Where Sora 2 fell short was prompt fidelity on the hero subject. The room took priority; the bee was present but underweighted. Prompting “very fast” produced a normal-speed drift in most generations. The cybernetic detail we specified on the bee did not register reliably. Getting one commercially usable take required repeated regenerations, which at $200/month adds up quickly. Sora 2 remained a reference for environmental storytelling; for subject-driven motion, it left work on the table.

Veo 3.1

Color and sharpness landed well. The kitchen scene had clean geometry and accurate material response on flat surfaces. Where Veo 3.1 missed was motion fidelity: the “very fast” instruction produced a slow drift, not flight. Playback also showed noticeable stutter in our output file. Audio was present and included ambient kitchen tone, but the sync to on-screen motion felt approximate rather than locked. For a prompt that leans heavily on speed and energy, Veo 3.1 delivered a competent but visually passive result.

PixVerse V6

Fisheye geometry held through the full pass. As the bee moved around appliances, the lens distortion tracked the subject position frame by frame without drifting. The amber honey in the broken jar showed plausible viscosity and light refraction as the camera pushed past it. Wing-speed audio was generated within the same pass as the video, and the buzz tracked the flight arc from entry to exit without a separate sync step. The cut from wide kitchen to tight macro on the honey jar read as a continuous move, not a stitch. Temporal stability held at 1080p across the full 15 seconds.

For full video output from each tool, plus an extended benchmark across 10 models, see our 2026 AI Video Generator benchmark.

OpenAI Sora 2

Sora 2 is OpenAI’s video and audio generation model, built to simulate physical consequences rather than interpolate visually plausible frames. In Sora 2, if a basketball player misses a shot, the ball rebounds off the backboard; in earlier models, it might teleport into the hoop. Modeling failure honestly is what separated Sora from everything before it.

Capabilities

Sora 2 launched on September 30, 2025 as a general-purpose video and audio system. It supported up to 12 seconds per generation at 1080p on the Pro tier. Complex motion, from triple axels to paddleboard backflips and multi-character dialogue, was modeled with more physical integrity than any competing tool at the time. Audio was native: synchronized speech, sound effects, and ambience in one pass.

The Characters feature let users insert a real person into a generated scene with accurate likeness and voice, after a one-time identity and consent verification. Multi-shot coherence was also strong. Sora 2 could follow instructions across multiple cuts while keeping the same environment, lighting, and objects consistent.

Limitations

Non-deterministic output was the consistent complaint. Even with precise prompts, character details drifted. Hands were unreliable. Getting a specific commercial result took many regenerations. The Pro tier ran at $200/month, and the cost-per-minute of usable output was high enough to exclude most independent work.

Shutdown

OpenAI pulled the Sora app and API on March 24, 2026. Compute overhead and regulatory scrutiny over synthetic media were the stated reasons. Sora 2 has no active public endpoint as of this writing.

The shutdown forced immediate migration for teams that had built on the Sora API. If you need a working tool now, see our Sora alternatives guide. The physics simulation standard Sora established remains the reference point against which later models are measured.

Google Veo 3.1

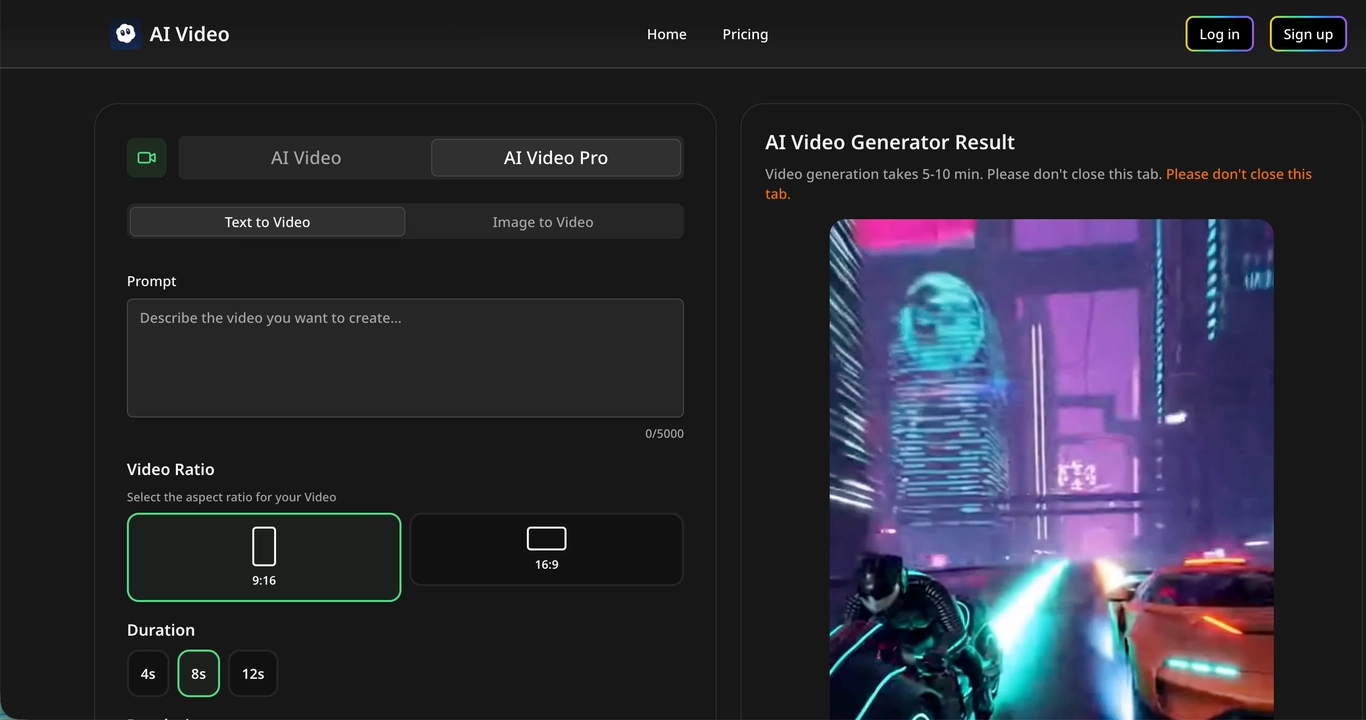

Veo 3.1 is Google’s generative video model, available through the Gemini API since October 2025 and accessible via Vertex AI, Google AI Studio, Flow, and the Gemini app.

Capabilities

The model supports 720p, 1080p, and 4K with 16:9 and 9:16 aspect ratios. Default clip length is 8 seconds. Scene extension lets you chain clips into sequences longer than a minute, with each new clip picking up from the final second of the previous one.
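Because each extension continues from the end of the previous clip, chained footage grows by less than a full clip per step. The sketch below estimates how many 8-second generations a target duration needs. It assumes, as our reading of "picking up from the final second" rather than a documented Gemini API rule, that the carried-over second overlaps, so each extension adds 7 seconds of new footage. The helper names are hypothetical.

```python
import math

CLIP_SECONDS = 8      # default Veo 3.1 clip length stated in this article
OVERLAP_SECONDS = 1   # assumption: each extension re-uses the previous clip's final second


def clips_needed(target_seconds: float,
                 clip_seconds: float = CLIP_SECONDS,
                 overlap_seconds: float = OVERLAP_SECONDS) -> int:
    """Hypothetical helper: number of chained clips needed to cover target_seconds."""
    if target_seconds <= 0:
        raise ValueError("target_seconds must be positive")
    if overlap_seconds >= clip_seconds:
        raise ValueError("overlap must be shorter than a clip")
    if target_seconds <= clip_seconds:
        return 1
    new_per_extension = clip_seconds - overlap_seconds  # fresh footage each extension adds
    return 1 + math.ceil((target_seconds - clip_seconds) / new_per_extension)


def total_seconds(num_clips: int,
                  clip_seconds: float = CLIP_SECONDS,
                  overlap_seconds: float = OVERLAP_SECONDS) -> float:
    """Footage length after chaining num_clips clips under the same overlap assumption."""
    if num_clips < 1:
        raise ValueError("num_clips must be at least 1")
    return clip_seconds + (num_clips - 1) * (clip_seconds - overlap_seconds)


if __name__ == "__main__":
    for target in (8, 15, 60):
        n = clips_needed(target)
        print(f"{target}s target: {n} clip(s), {total_seconds(n)}s of footage")
```

Under these assumptions, a 15-second asset takes two generations and a one-minute sequence takes nine (64 seconds of footage).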

Audio improved substantially from earlier Veo versions. Dialogue, sound effects, and ambience are generated in the same pass as the video.

Ingredients to Video takes up to three reference images and anchors character identity or scene style across multiple generations. This reduces prompt iteration for brands or productions that have established visual assets.

First and last frame control lets you specify a starting and ending image, and Veo 3.1 generates the transition between them, with audio.
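The options described in this section can be collected into one request object and checked locally before a job is submitted. The sketch below is a hypothetical data structure: the class and field names are illustrative and are not the Gemini API or Vertex AI schema. The limits mirror the figures stated here (up to three reference images, 16:9 or 9:16, 720p/1080p/4K).

```python
from dataclasses import dataclass, field
from typing import List, Optional

ALLOWED_ASPECT_RATIOS = {"16:9", "9:16"}
ALLOWED_RESOLUTIONS = {"720p", "1080p", "4K"}
MAX_REFERENCE_IMAGES = 3  # "Ingredients to Video" limit described in this article


@dataclass
class VeoJobSpec:
    """Hypothetical job spec; field names are illustrative, not the real API schema."""
    prompt: str
    aspect_ratio: str = "16:9"
    resolution: str = "1080p"
    reference_images: List[str] = field(default_factory=list)  # identity/style anchors
    first_frame: Optional[str] = None  # start image for first/last frame control
    last_frame: Optional[str] = None   # end image; the model generates the transition

    def validate(self) -> List[str]:
        """Return a list of problems; an empty list means the spec looks usable."""
        problems = []
        if not self.prompt.strip():
            problems.append("prompt is empty")
        if self.aspect_ratio not in ALLOWED_ASPECT_RATIOS:
            problems.append(f"unsupported aspect ratio {self.aspect_ratio!r}")
        if self.resolution not in ALLOWED_RESOLUTIONS:
            problems.append(f"unsupported resolution {self.resolution!r}")
        if len(self.reference_images) > MAX_REFERENCE_IMAGES:
            problems.append(f"at most {MAX_REFERENCE_IMAGES} reference images allowed")
        # Assumption: an end frame only makes sense alongside a start frame.
        if self.last_frame is not None and self.first_frame is None:
            problems.append("last_frame requires a first_frame")
        return problems
```

Validating locally keeps malformed requests from consuming paid generations; the actual field names and limits should be taken from Google's documentation.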

Access

Veo 3.1 is available through the Gemini API (Google AI Studio, Vertex AI) and consumer surfaces including the Gemini app, Flow, and YouTube Shorts. Enterprise access through Vertex AI includes data governance controls and SLA structures. Teams already on Google Cloud have the shortest integration path.

Limitations

Default 8-second clips are short. Longer narrative content requires deliberate sequential prompting via scene extension, which is different from a single-pass generation with built-in multi-shot logic. Teams outside the Google Cloud ecosystem take on real integration overhead.

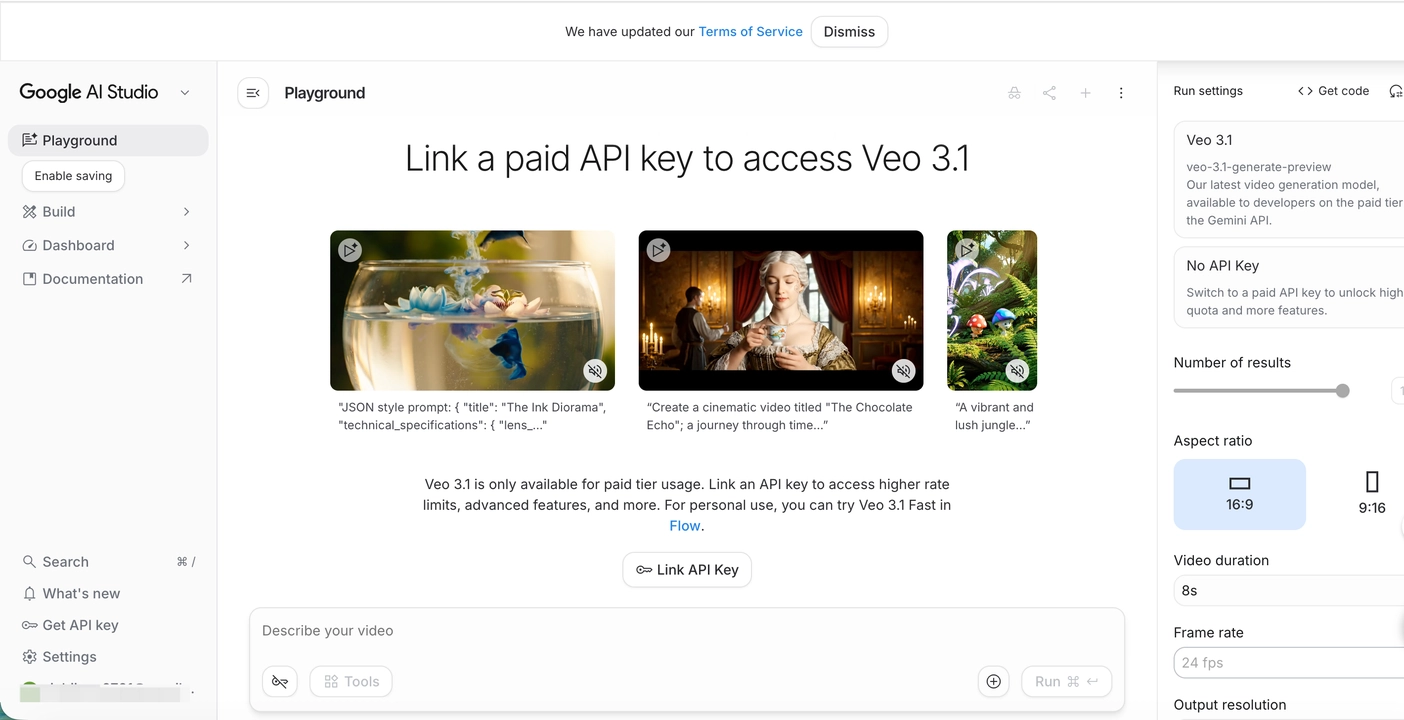

PixVerse

Sora and Veo are single-model products. PixVerse ships three: V6 for cinematic video generation, R1 for real-time interactive worlds, and Mini Apps for automated commercial video production. Each addresses a different stage of the content pipeline.

For a like-for-like comparison with Sora 2 and Veo 3.1, PixVerse V6 is the direct match. R1 and Mini Apps matter when the decision expands beyond standard prompt-to-video generation into real-time experiences or automated commercial workflows.

PixVerse V6

PixVerse V6, released on March 30, 2026, is built to generate long, coherent clips in a single pass without stitching.

Built on a Diffusion Transformer architecture, V6 generates up to 15 seconds at 1080p in a single pass. Most models at 1080p fragment around the 5-8 second mark. V6 holds temporal stability across the full generation more consistently.

Native audio is generated simultaneously with the video, not layered afterward. The multi-shot engine handles scene transitions with shared world state, so a wide establishing shot can cut to a tight macro without lighting or materials drifting between cuts.

Text-in-video supports multilingual rendering. On-screen text holds character shapes through motion, removing a persistent constraint for brands running localized campaigns.

V6 ships 20+ cinematic lens parameters, including focal length, aperture, depth of field, chromatic aberration, and vignetting. Setting these before generation gives directors finer control than a generic style toggle.
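One way to keep lens choices identical across a batch of shots is to store them as a reusable preset and render that preset into each prompt. This is a minimal sketch under assumptions: the `LensSettings` class, its field names, and the approach of passing lens choices as prompt text are all illustrative. How V6 actually accepts these parameters is not specified here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LensSettings:
    """Hypothetical lens preset covering the parameters named in this article."""
    focal_length_mm: int = 35
    aperture_f: float = 2.8
    depth_of_field: str = "shallow"     # e.g. "shallow" or "deep"
    chromatic_aberration: bool = False
    vignetting: bool = False

    def __post_init__(self):
        if self.focal_length_mm <= 0:
            raise ValueError("focal_length_mm must be positive")
        if self.aperture_f <= 0:
            raise ValueError("aperture_f must be positive")

    def to_prompt_suffix(self) -> str:
        """Render the preset as plain-language camera direction."""
        parts = [
            f"{self.focal_length_mm}mm lens",
            f"f/{self.aperture_f:g}",
            f"{self.depth_of_field} depth of field",
        ]
        if self.chromatic_aberration:
            parts.append("subtle chromatic aberration")
        if self.vignetting:
            parts.append("soft vignetting")
        return ", ".join(parts)


def build_prompt(scene: str, lens: LensSettings) -> str:
    """Combine a scene description with the lens preset (illustrative only)."""
    return f"{scene.rstrip('.')}. Camera: {lens.to_prompt_suffix()}."
```

Making the preset frozen means a campaign can reuse one object for every shot without the lens direction drifting between prompts.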

Where V6 separates from earlier PixVerse models is in physical simulation. Skin texture, muscle tension during movement, gravity, viscosity, and elasticity all track more believably. Combat sequences land with visible impact. Special-effect shots like bullet time, time-lapse, and rack focus now succeed at a high rate without heavy prompt engineering.

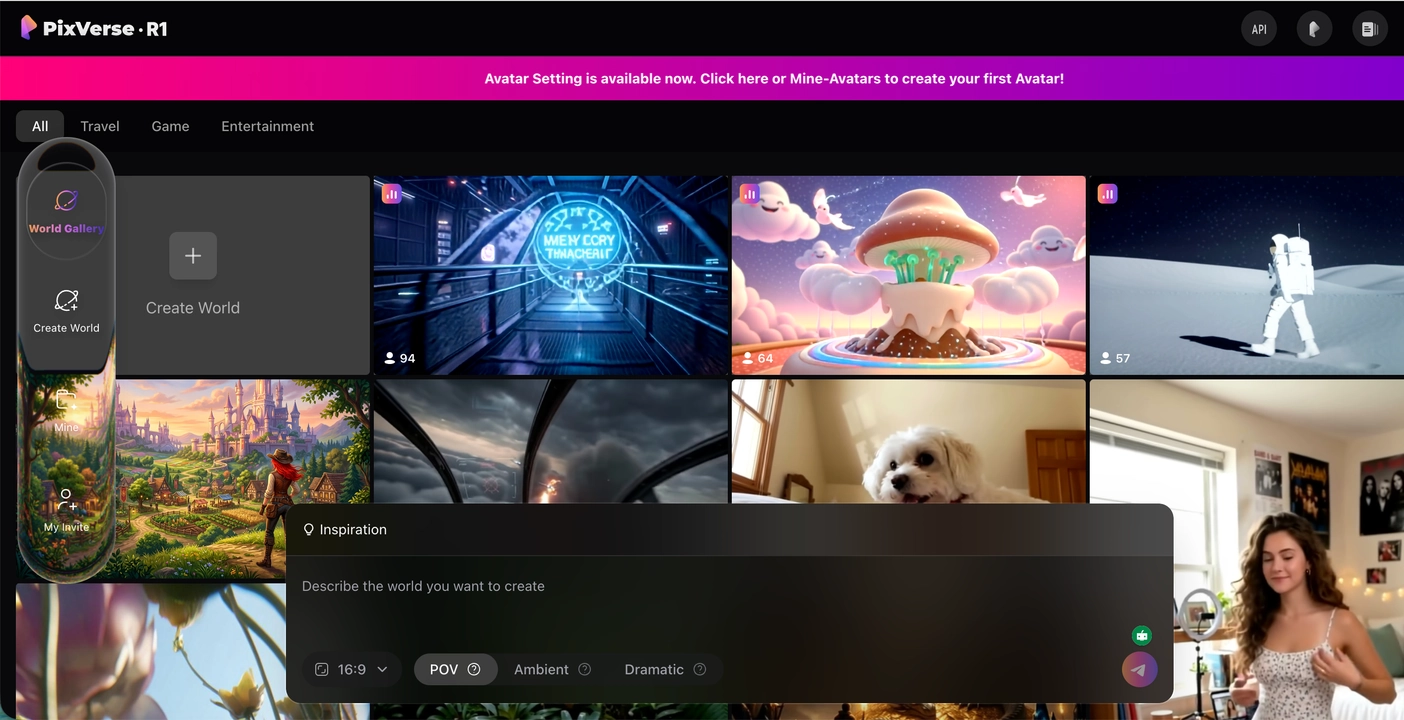

PixVerse R1

PixVerse R1 is PixVerse’s real-time world model, first launched in January 2026 and updated in April 2026 with multi-user shared worlds. Where V6 produces a finished video file, R1 generates a persistent, interactive visual environment that responds to user input in real time.

The technical foundation combines three components: an Omni Native Multimodal Foundation Model that processes text, image, video, and audio as a unified token stream; a Consistency-aware Autoregressive Framework that maintains temporal coherence across long horizons; and an Instantaneous Response Engine that achieves millisecond-level latency at 1080p.

The April 2026 update introduced Shared Worlds: continuous, 24/7 interactive livestreams where multiple users submit prompts into a common feed. The AI picks up prompts and generates the corresponding visual content live, while a built-in chat layer lets viewers interact alongside the stream. It functions closer to a multiplayer Twitch channel than a generation tool.
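How R1 chooses which prompts from a shared feed to render is not described here. As a purely hypothetical illustration of the scheduling problem, the sketch below accepts prompts from many viewers and serves them round-robin by user, so one busy viewer cannot monopolize the stream. The class, its methods, and the per-user cap are invented for this example.

```python
from collections import OrderedDict, deque
from typing import Deque, Optional, Tuple


class SharedPromptFeed:
    """Hypothetical round-robin prompt queue for a multi-user live stream."""

    def __init__(self, max_pending_per_user: int = 3):
        self.max_pending_per_user = max_pending_per_user
        # Insertion-ordered map of user -> that user's pending prompts
        self._queues: "OrderedDict[str, Deque[str]]" = OrderedDict()

    def submit(self, user: str, prompt: str) -> bool:
        """Queue a prompt; return False if the user already has too many pending."""
        queue = self._queues.setdefault(user, deque())
        if len(queue) >= self.max_pending_per_user:
            return False
        queue.append(prompt)
        return True

    def next_prompt(self) -> Optional[Tuple[str, str]]:
        """Take one prompt from the user at the front, then rotate that user to the back."""
        while self._queues:
            user, queue = next(iter(self._queues.items()))
            if not queue:
                del self._queues[user]  # drop users with nothing pending
                continue
            prompt = queue.popleft()
            if queue:
                self._queues.move_to_end(user)  # wait for everyone else's turn
            else:
                del self._queues[user]
            return user, prompt
        return None
```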

Personalized Avatars let users upload photos (front, side, back) to create a digital representation that moves, animates, and travels across different worlds.

Note: R1 Shared Worlds and Personalized Avatars are now live and free to access at realtime.pixverse.ai. Additional R1 features, including expanded world types and deeper avatar customization, are in active development.

No competing platform from OpenAI or Google currently offers a comparable real-time, interactive world generation product. R1 occupies a category that Sora 2 and Veo 3.1 do not address.

Mini Apps

Mini Apps is PixVerse’s suite of scenario-specific tools built on top of its generation models, designed to compress full production workflows into single-step operations.

The first Mini App, Ad Master, is an AI ad video generator launched on March 31, 2026. It takes a product image and a short description, then generates a complete advertising video with scene composition, model matching, voiceover, and subtitles.

For e-commerce teams and small businesses that cannot justify a production pipeline for every SKU, Ad Master removes the gap between having a product photo and having a shippable video ad.
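Using the approximate Ad Master prices listed in the PixVerse comparison table in this article (about $3 per video, or $2 for subscribers), a per-SKU budget is simple arithmetic. This is a rough sketch: it assumes a fixed number of finished ads per SKU and ignores subscription fees and any regenerations, both of which would raise the real total.

```python
PRICE_PER_VIDEO = 3.00    # approximate list price quoted in this article
SUBSCRIBER_PRICE = 2.00   # approximate subscriber price quoted in this article


def catalog_cost(num_skus: int, videos_per_sku: int = 1, subscriber: bool = False) -> float:
    """Estimate Ad Master spend for a catalog; excludes subscription fees and retries."""
    if num_skus < 0 or videos_per_sku < 0:
        raise ValueError("counts must be non-negative")
    unit_price = SUBSCRIBER_PRICE if subscriber else PRICE_PER_VIDEO
    return num_skus * videos_per_sku * unit_price


if __name__ == "__main__":
    for skus in (50, 500):
        print(f"{skus} SKUs: ${catalog_cost(skus):,.2f} list, "
              f"${catalog_cost(skus, subscriber=True):,.2f} subscriber")
```

For example, 500 SKUs at one ad each come to about $1,500 at list price or $1,000 at the subscriber rate.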

Note: Ad Master is live at app.pixverse.ai/mini-apps. Additional Mini Apps covering visual storytelling, short-form content, and audio are in development. The Mini Apps suite is expected to expand throughout 2026.

PixVerse models at a glance

| | V6 | R1 | Mini Apps (Ad Master) |
|---|---|---|---|
| Purpose | Cinematic video generation | Real-time interactive worlds | Automated commercial video |
| Output | Finished video file (up to 15s 1080p) | Persistent live visual stream (1080p) | Complete ad video with voiceover |
| Input | Text prompt or reference image | Text prompt (live, multi-user) | Product image + description |
| Audio | Native, synchronized with motion | Real-time ambient generation | Auto-generated voiceover + subtitles |
| Interaction | Generate, review, iterate | Real-time, shared, continuous | One-step automated |
| Best for | Filmmakers, agencies, developers | Community engagement, interactive experiences | E-commerce, small business, performance marketing |
| Pricing | Free daily credits + subscription tiers | Free access | ~$3/video ($2 for subscribers) |

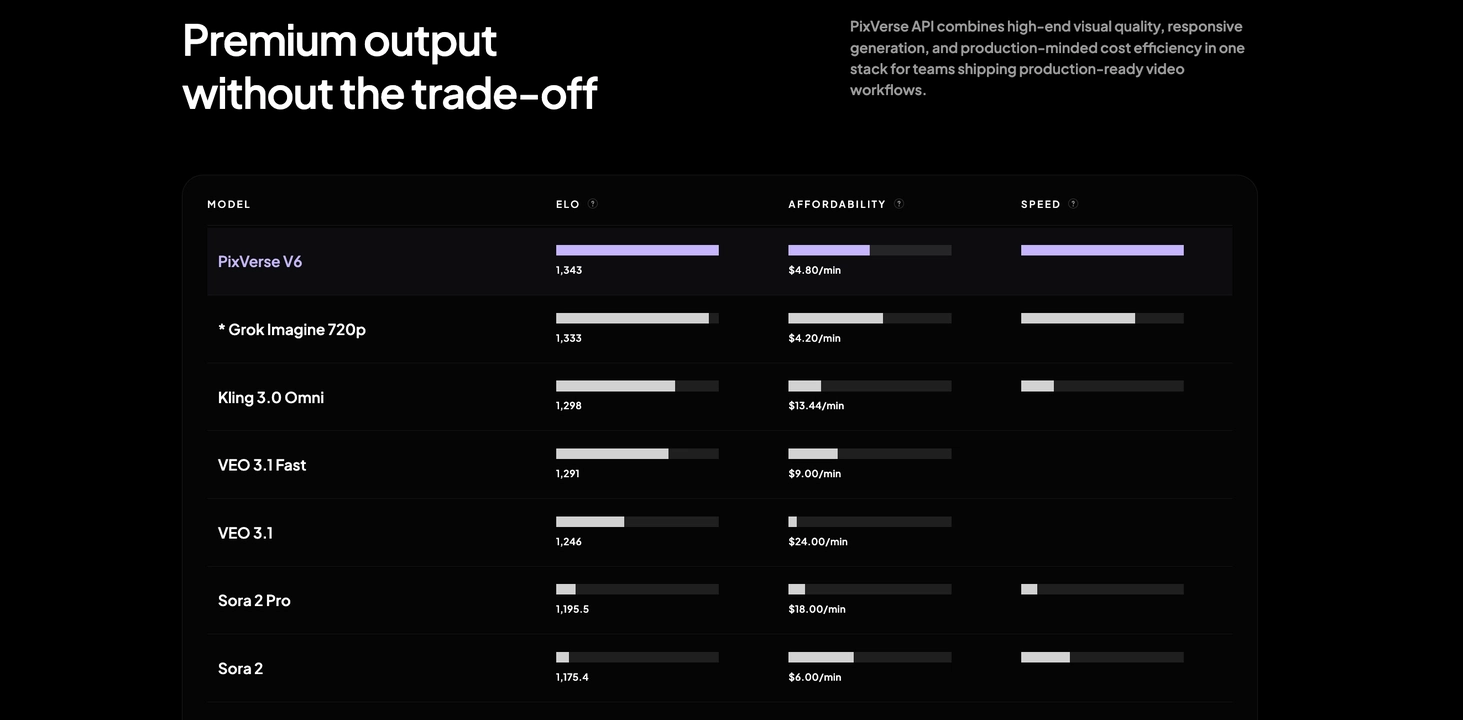

Benchmarks

The leaderboard above ranks models by Elo score, price per minute, and generation speed. PixVerse V6 leads with an Elo of 1,343 at $4.80/min. Veo 3.1 Fast scores 1,291 at $9.00/min; standard Veo 3.1 sits at 1,246 at a higher $24.00/min. Sora 2 Pro lands at 1,195.5 at $18.00/min, and standard Sora 2 at 1,175.4 at $6.00/min. PixVerse V6 also leads the speed column by a visible margin. Among the three platforms compared in this article, PixVerse V6 has the highest Elo, the lowest per-minute price of any listed tier, and the fastest generation.
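If the leaderboard uses the conventional Elo scale (an assumption, since its formula is not stated here), rating gaps translate into expected head-to-head preference rates. The sketch below applies the standard 400-point logistic Elo expectation to the ratings and prices quoted above. It does not reproduce the leaderboard's own methodology.

```python
# Elo ratings and prices ($/min) as quoted in the leaderboard summary above
LEADERBOARD = {
    "PixVerse V6": (1343.0, 4.80),
    "Veo 3.1 Fast": (1291.0, 9.00),
    "Veo 3.1": (1246.0, 24.00),
    "Sora 2 Pro": (1195.5, 18.00),
    "Sora 2": (1175.4, 6.00),
}


def expected_win_rate(rating_a: float, rating_b: float) -> float:
    """Standard Elo expectation that A is preferred over B (400-point logistic scale)."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def cheapest(models: dict) -> str:
    """Name of the model with the lowest price per minute."""
    return min(models, key=lambda name: models[name][1])


if __name__ == "__main__":
    top_rating, _ = LEADERBOARD["PixVerse V6"]
    for name, (rating, price) in LEADERBOARD.items():
        if name == "PixVerse V6":
            continue
        share = expected_win_rate(top_rating, rating)
        print(f"PixVerse V6 vs {name} (${price:.2f}/min): {share:.1%} expected preference")
    print("Lowest price per minute:", cheapest(LEADERBOARD))
```

Under that assumption, the 52-point gap between PixVerse V6 and Veo 3.1 Fast corresponds to roughly a 57% expected preference rate in pairwise votes: a real but modest edge.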

Enterprise cases

Runware is a global AI inference platform serving 200,000+ developers through a unified API for image, video, and audio generation. When the company expanded into video, they needed a model that would run at infrastructure pricing with sub-second inference latency and hold up under multi-model API compatibility requirements. PixVerse V6 cleared that bar. Runware embedded PixVerse into their API stack via the Sonic Inference Engine, enabling text-to-video and image-to-video at $0.29 per generation, a 62% reduction from market rate, with sub-second model loading. For Runware’s developer clients, production-quality video is now callable through the same API they already use for images, without a separate integration or pricing tier.
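The Runware figures imply a market reference price. If $0.29 is a 62% reduction, it equals 38% of that reference, or about $0.76 per generation. The reference price and the clip length behind it are not named here, so treat the sketch below as a back-of-the-envelope check rather than a pricing quote.

```python
def implied_reference_price(discounted_price: float, reduction: float) -> float:
    """Solve discounted = reference * (1 - reduction) for the reference price."""
    if not 0 <= reduction < 1:
        raise ValueError("reduction must be in [0, 1)")
    return discounted_price / (1 - reduction)


def batch_cost(generations: int, price_per_generation: float) -> float:
    """Total spend for a batch of generations at a flat per-generation price."""
    return generations * price_per_generation


if __name__ == "__main__":
    reference = implied_reference_price(0.29, 0.62)
    print(f"Implied market rate: ${reference:.2f} per generation")
    print(f"10,000 generations: ${batch_cost(10_000, 0.29):,.2f} "
          f"vs ${batch_cost(10_000, reference):,.2f}")
```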

Perfect Corp (NYSE: PERF) is the company behind the YouCam app suite, with 1.1 billion downloads and beauty tools serving L’Oréal, Estée Lauder, Tom Ford, and 400+ premium brands across 67 countries. The company needed a video generation layer to power AI avatar creation, beauty effect visualization, and product content automation inside YouCam’s interface. PixVerse’s API was integrated into the YouCam Online Editor: users upload a photo or type a prompt, and PixVerse handles the video generation within the YouCam workspace. Consumers can go from a product photo to a finished, shareable video demonstrating a beauty look without leaving the app. For brands like L’Oréal, this delivers product content with the visual quality needed for global retail and e-commerce, produced at the pace of a digital campaign.

Developer access

V6 and Mini Apps are available through the web platform. PixVerse also ships a CLI that integrates with coding agents including Claude Code, Codex, and Cursor, and supports multi-model access to PixVerse V6, Veo 3.1, and Grok from a single npm install. Production pipelines can call the PixVerse API as an automated step, with no separate GUI workflow. See the PixVerse CLI guide for setup.
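The CLI's commands and the API's endpoints are not covered here, so the sketch below uses neither. It shows only the general shape of an automated pipeline step. A hypothetical `generate` callable, standing in for whatever PixVerse client or CLI wrapper a team actually uses, is invoked with retries and exponential backoff, and the last error surfaces once the attempts run out.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def run_with_retries(generate: Callable[[], T],
                     attempts: int = 3,
                     base_delay_s: float = 2.0,
                     sleep: Callable[[float], None] = time.sleep) -> T:
    """Call a generation function, retrying with exponential backoff on failure.

    `generate` is a placeholder for a real client call; nothing here is PixVerse-specific.
    """
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    last_error: Exception = RuntimeError("no attempts made")
    for attempt in range(attempts):
        try:
            return generate()
        except Exception as err:  # in practice, catch the client's specific error types
            last_error = err
            if attempt < attempts - 1:
                sleep(base_delay_s * (2 ** attempt))  # waits 2s, 4s, 8s, ...
    raise last_error
```

Injecting `sleep` keeps the step testable without real waits, and keeping the client call behind a plain callable lets the same wrapper serve any provider in a multi-model pipeline.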

R1 is accessible at realtime.pixverse.ai. API access for R1 is available through the PixVerse R1 Partner Program.

Commercial use and operational fit

For teams evaluating these tools for paid production, the decision is not only about output quality. It is also about access path, pricing model, iteration cost, deployment workflow, and whether the product maps cleanly to the job you actually need done.

Veo 3.1 is strongest when procurement, governance, and deployment already sit inside Google’s stack. PixVerse V6 is stronger when the bottleneck is longer coherent output, cinematic control, or lower-friction iteration from prompt to finished clip. PixVerse R1 and Mini Apps become relevant when the commercial requirement is real-time audience interaction or product-to-ad automation rather than general video generation. In all cases, teams should confirm the latest commercial use, moderation, and data handling terms directly with the platform they plan to ship on.

Where each tool fits

Short-form social clips: Veo 3.1’s 8-second output and vertical 9:16 support cover most social content needs with minimal prompting overhead. PixVerse V6 handles the same formats at 15 seconds for content that needs more story room. Sora 2 is offline.

Campaign hero video: When the asset needs 12-15 seconds with product-consistent lighting across a sequence of shots, V6’s single-pass length and built-in multi-shot logic reduce the iteration cost compared to Veo’s sequential extension approach. Both produce professional output; the difference is how much manual prompting sits between shots.

Multi-shot narrative: Veo 3.1’s scene extension and reference image support handle longer sequences. V6’s multi-shot engine manages character-consistent cuts within a single generation and requires fewer stitching iterations for structured narrative.

High-volume automated production: V6 via the PixVerse CLI and API, deployed at scale through Runware’s developer platform, fits teams that need video generation inside automated pipelines. Veo 3.1 via Vertex AI fits teams already in Google Cloud. Sora 2’s API is offline.

E-commerce and product ads: PixVerse Mini Apps (Ad Master) is purpose-built for this. Upload a product image and get a finished ad video with voiceover and subtitles for roughly $2-3 per video. Neither Sora 2 nor Veo 3.1 offers a single-step product-to-ad pipeline.

Interactive experiences and community engagement: PixVerse R1’s shared worlds create a new format entirely: multi-user, real-time, persistent generation. There is no direct comparison to Sora or Veo here. The closest analog is a live Twitch stream where the content is AI-generated from audience prompts.

Beauty, retail, and product visualization: V6’s photorealistic rendering, stable face mapping, and multilingual text-in-video are the technical foundation for how Perfect Corp built their YouCam video generation suite. Veo 3.1 handles similar visual quality but does not have a comparable enterprise reference deployment in this category.

FAQ

Is Sora still available?

As of March 24, 2026, OpenAI’s Sora app and API are offline. There is no active public endpoint for Sora 2.

How does Veo 3.1 compare to PixVerse V6 for longer content?

Veo 3.1’s default output is 8 seconds; scene extension pushes beyond a minute but requires sequential prompting. V6 generates up to 15 seconds in a single pass with multi-shot logic built in. V6 is better suited when the asset needs narrative structure across cuts; Veo 3.1 is a straightforward fit for individual high-quality clips.

What is PixVerse R1?

R1 is PixVerse’s real-time world model. Instead of generating a finished video file, it creates a persistent, interactive visual environment that responds to user prompts in real time. The April 2026 update added multi-user shared worlds and personalized avatars. It is free to access at realtime.pixverse.ai.

Can I use these tools for commercial production?

Commercial use depends on each platform’s current product tier, API terms, moderation rules, and regional policies. Before shipping paid campaigns or client work, teams should verify the latest usage rights and data handling terms directly with OpenAI, Google, and PixVerse.

Which AI video generator should I test first?

For video generation, skip the demo prompts. Run a real brief through Veo 3.1 and PixVerse V6. Score each on audio sync accuracy, cross-shot consistency, and how many iterations it took to get something usable. For e-commerce, try Ad Master with a product photo and compare output time against your current workflow.
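A lightweight way to run that comparison is to log every attempt per tool and summarize the log at the end. The sketch below is one possible rubric, not a standard. The 1-to-5 scales, the field names, and treating "first usable take" as the iteration count are all assumptions to adapt to your own brief.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class Attempt:
    """One generation from one tool for the same real brief (field names are illustrative)."""
    tool: str
    audio_sync: int   # 1-5 reviewer score (assumed scale)
    cross_shot: int   # 1-5 reviewer score for consistency across cuts (assumed scale)
    usable: bool      # would this take ship without further regeneration?


def summarize(attempts: List[Attempt]) -> Dict[str, Dict[str, float]]:
    """Per tool: mean reviewer scores and the attempt number of the first usable take."""
    summary: Dict[str, Dict[str, float]] = {}
    for tool in sorted({a.tool for a in attempts}):
        runs = [a for a in attempts if a.tool == tool]  # keeps submission order
        first_usable = next((i + 1 for i, a in enumerate(runs) if a.usable), float("inf"))
        summary[tool] = {
            "audio_sync": mean(a.audio_sync for a in runs),
            "cross_shot": mean(a.cross_shot for a in runs),
            "attempts_to_usable": first_usable,
        }
    return summary
```

A tool that never produced a usable take reports `inf` for `attempts_to_usable`, which keeps it from looking artificially efficient.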

Conclusion

Sora 2 was technically the strongest video model of 2025. It is also offline. What it leaves behind is a physics simulation benchmark. Neither Veo 3.1 nor PixVerse V6 has fully matched its world coherence at peak performance.

Veo 3.1 is Google’s active answer: polished short-form video with native audio, tight ecosystem integration, and developer-grade API access. It fits teams that already operate in Google Cloud and need reliable 8-second outputs at scale.

PixVerse is the broader platform play. V6 handles the direct comparison layer with longer single-pass output and a built-in multi-shot engine. R1 introduces real-time, interactive world generation that neither Sora nor Veo attempted. Mini Apps compresses full ad production into a single upload. The Runware and Perfect Corp deployments show how V6 works at infrastructure scale; R1 and Mini Apps extend the platform into categories where the comparison is not Sora or Veo, but traditional production pipelines.

The active choice for standard video generation is between Veo 3.1 and PixVerse V6. For real-time interactive content or automated commercial video, PixVerse currently operates without a direct competitor from either OpenAI or Google.